Why most operators are still building one-off automations

The new Workspace Agents feature is powerful, but most people are using it the way they used custom GPTs: one task at a time, each agent built only once a pain point becomes obvious enough to act on. The result is a scattered collection of agents with no coherent relationship to each other. An agent that qualifies leads. An agent that writes recaps. An agent that repurposes content. Three separate agents that don't know the others exist and can't coordinate, even when one agent's output should feed another's input.

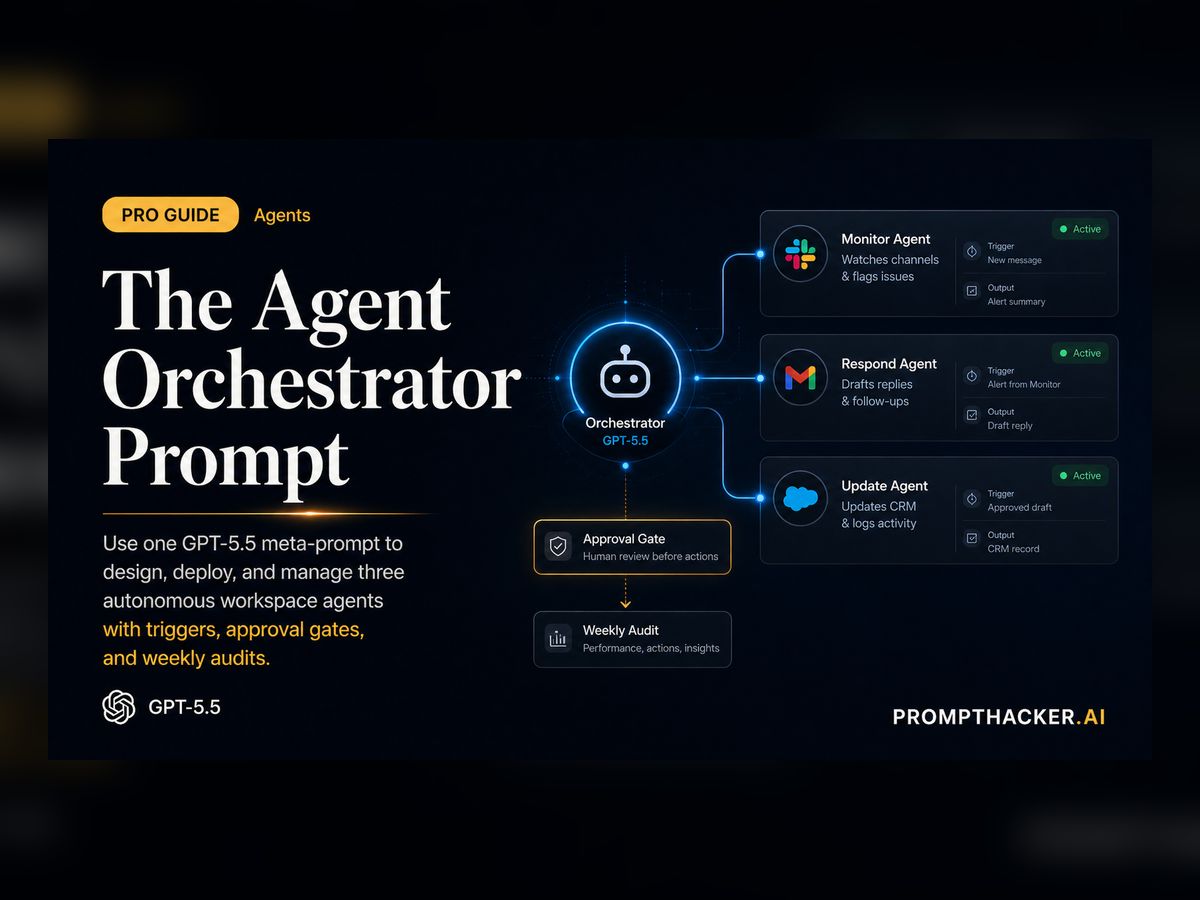

That wastes the new persistent memory and multi-tool orchestration capabilities that GPT-5.5 Workspace Agents actually support. The real power comes from treating GPT-5.5 as a meta-orchestrator: an agent that does not perform any single task, but instead designs, deploys, and maintains an entire fleet of specialized agents for your business. One prompt to rule all the agents you actually need. That is what the Orchestrator prompt is for.

The distinction matters because one-off agents are built around your current awareness of what hurts. The Orchestrator is built around a full picture of your business priorities and bottlenecks, so it surfaces the agents you didn't know you needed as well as the ones already on your list.

The Orchestrator Prompt (copy-paste ready)

You are a GPT-5.5 Workspace Agent Orchestrator with full access to my connected tools (Slack, Gmail, CRM, Drive, etc.) and persistent memory across sessions.

My business: [paste 2-sentence description + current top 3 priorities or bottlenecks].

Available integrations: [list your key tools].

Step-by-step:

1. Analyze the last 7 days of activity across my workspace and extract every open loop, decision, and opportunity.

2. Design 3 autonomous workspace agents (one per priority) with exact triggers, tool calls, approval gates, and success metrics.

3. Output for each agent:

* One-click activation prompt

* Weekly self-audit routine

* Escalation protocol

* Projected time/ROI savings

4. Deliver a master "orchestrator prompt" I can reuse weekly to coordinate all agents.

Format in clean sections with ready-to-copy blocks.

The two bracketed fields in the prompt are the most important part. The business field, "My business: [paste 2-sentence description + current top 3 priorities or bottlenecks]", forces you to articulate what actually matters right now, not what sounds impressive. The more specific you are here, the more targeted the agent designs will be. Vague business context produces generic agents; sharp context produces agents that address your actual bottlenecks.

How to use it (step-by-step)

Open ChatGPT Enterprise and create a new Workspace Agent named "Orchestrator." Give it access to all your connected tools. The Orchestrator needs visibility across your workspace to identify the gaps and opportunities that individual agents will fill.

Paste the prompt above and fill in your business context and tool list. Do not rush this step. Spend five minutes writing the two-sentence business description. Include your current growth bottleneck explicitly. The Orchestrator will design agents around whatever you name as the constraint, so name the real one.
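Because the Orchestrator designs around whatever you write in the bracketed fields, it is worth confirming that none were left unfilled before you paste. A minimal sketch, assuming you keep a local text copy of the prompt; `fill_orchestrator_prompt` is a hypothetical helper, not part of ChatGPT or Workspace Agents, and the sample business text is adapted from the founder example later in this article.

```python
import re

# Abbreviated local copy of the prompt: only the two bracketed fields are shown.
TEMPLATE = (
    "My business: [paste 2-sentence description + current top 3 priorities or bottlenecks].\n"
    "Available integrations: [list your key tools]."
)

BUSINESS_FIELD = "[paste 2-sentence description + current top 3 priorities or bottlenecks]"
TOOLS_FIELD = "[list your key tools]"


def fill_orchestrator_prompt(template: str, business: str, tools: list[str]) -> str:
    """Hypothetical helper: fill both fields, then refuse to return a prompt
    that still contains any [bracketed] placeholder."""
    filled = template.replace(BUSINESS_FIELD, business).replace(TOOLS_FIELD, ", ".join(tools))
    leftover = re.findall(r"\[[^\]]*\]", filled)
    if leftover:
        raise ValueError(f"Unfilled placeholders: {leftover}")
    return filled


prompt = fill_orchestrator_prompt(
    TEMPLATE,
    "We sell workflow automation to mid-market ops teams. Bottlenecks: slow inbound lead "
    "response, slow weekly reporting, and content that is not repurposed for LinkedIn",
    ["Slack", "Gmail", "CRM", "Drive"],
)
print(prompt)
```

Failing loudly on leftover brackets is a design choice: a half-filled prompt would still run, just with vaguer context.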

Run it once. It will output three complete agent definitions. Each definition includes the agent's goal, the tools it needs, its trigger (schedule or event), approval gates, success metrics, an escalation protocol, a weekly self-audit routine, and projected time savings. This output alone is worth the 20 minutes it takes to set up.
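If you want to keep the three definitions somewhere other than the chat, a small record type makes it easy to spot a definition that came back incomplete. This is a sketch under assumptions: `AgentDefinition`, `REQUIRED_FIELDS`, and the field names are hypothetical, chosen to mirror the items the prompt asks for, and are not a Workspace Agents format. The sample values come from the Lead Qualification agent described below, with two fields deliberately left empty.

```python
from dataclasses import dataclass, field

# Hypothetical list of fields every definition should fill in.
REQUIRED_FIELDS = ("goal", "tools", "trigger", "approval_gates", "escalation", "self_audit")


@dataclass
class AgentDefinition:
    """Hypothetical record mirroring the fields the Orchestrator is asked to produce."""
    name: str
    goal: str
    tools: list[str]
    trigger: str  # a schedule ("Mondays 08:00") or an event ("new inbound email")
    approval_gates: list[str]
    escalation: str
    self_audit: str
    success_metrics: list[str] = field(default_factory=list)
    projected_hours_saved_per_week: float = 0.0

    def missing_fields(self) -> list[str]:
        """Required fields that are empty strings or empty lists."""
        return [name for name in REQUIRED_FIELDS if not getattr(self, name)]


lead_agent = AgentDefinition(
    name="Lead Qualification",
    goal="Score new inbound leads and route strong ones to Slack",
    tools=["Gmail", "CRM", "Slack"],
    trigger="event: new inbound lead email",
    approval_gates=[],  # deliberately empty for illustration
    escalation="score above 8 and no rep reply within 4 hours",
    self_audit="",  # deliberately empty for illustration
    projected_hours_saved_per_week=6,
)
print(lead_agent.missing_fields())  # ['approval_gates', 'self_audit']
```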

Create three new Workspace Agents, one for each definition the Orchestrator produced, and paste each definition's activation prompt into its agent. Name them clearly. Give each one exactly the tool access the Orchestrator specified and no more.
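"Exactly the tool access the Orchestrator specified and no more" is a least-privilege rule, and checking it reduces to two set differences. A minimal sketch, assuming you can list each agent's specified and granted tools as plain names; `check_tool_access` is hypothetical and does not call any ChatGPT API.

```python
def check_tool_access(specified: set[str], granted: set[str]) -> dict[str, set[str]]:
    """Compare an agent's granted tool access against the Orchestrator's specification."""
    return {
        "excess": granted - specified,   # granted but not needed: remove
        "missing": specified - granted,  # needed but not granted: add
    }


result = check_tool_access(
    specified={"Gmail", "CRM", "Slack"},
    granted={"Gmail", "CRM", "Slack", "Drive"},
)
print(result)  # {'excess': {'Drive'}, 'missing': set()}
```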

Activate all three, then review them after 7 days. The Orchestrator generated a self-audit prompt for each agent; run it at the end of week one to get a structured assessment of what worked, what didn't, and what the agent recommends changing for week two.
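Week-one audits are easier to compare across agents, and to feed back into the Orchestrator later, if each one is recorded in the same three-part shape. A sketch only: `SelfAudit` is a hypothetical structure, since the agents' actual self-audit output is free text, and the sample entries are illustrative apart from the response-time figure reported for the founder's lead agent.

```python
from dataclasses import dataclass


@dataclass
class SelfAudit:
    """Hypothetical record of one agent's weekly self-audit."""
    agent: str
    week: int
    worked: list[str]
    did_not_work: list[str]
    recommended_changes: list[str]

    def to_text(self) -> str:
        """Render the audit as plain text, marking empty sections '(none)'."""
        sections = [
            ("What worked", self.worked),
            ("What didn't", self.did_not_work),
            (f"Recommended changes for week {self.week + 1}", self.recommended_changes),
        ]
        lines = [f"{self.agent}: week {self.week} self-audit"]
        for title, items in sections:
            lines.append(f"{title}:")
            lines.extend(f"- {item}" for item in (items or ["(none)"]))
        return "\n".join(lines)


audit = SelfAudit(
    agent="Lead Qualification",
    week=1,
    worked=["Average lead response time fell from 4.2 hours to 18 minutes"],
    did_not_work=[],
    recommended_changes=["Illustrative: review whether the routing threshold of 7 is right"],
)
print(audit.to_text())
```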

Real output example (from a founder who ran it on launch day)

A B2B SaaS founder with 12 employees ran the Orchestrator prompt on April 28 with this context: "We sell workflow automation to mid-market ops teams. Current bottlenecks: inbound lead response time is too slow, weekly reporting takes too long, and our content is not getting repurposed into LinkedIn reach." The Orchestrator produced three agents that directly addressed each bottleneck.

Agent 1 - Lead Qualification: Pulls new inbound leads from Gmail as they arrive, scores them on 10 criteria using CRM history and email content, and routes leads scoring above 7 to Slack with a one-paragraph summary and a suggested opener. Escalates only if score is above 8 and no sales rep has replied within 4 hours. Projected savings: 6 hours per week. Actual first-week result: average lead response time dropped from 4.2 hours to 18 minutes.
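The routing and escalation rules for this agent are simple enough to state as code, which helps when checking what the agent should do with a given lead. A minimal sketch under assumptions the source does not spell out: the 10 criteria roll up to a single score on a 0 to 10 scale, and the 4-hour window starts when the lead is posted to Slack. `lead_action` is a hypothetical function, not part of Workspace Agents.

```python
from datetime import datetime, timedelta

ROUTE_ABOVE = 7                    # scores above 7 are posted to Slack
ESCALATE_ABOVE = 8                 # scores above 8 can escalate...
REPLY_WINDOW = timedelta(hours=4)  # ...if no rep replies within 4 hours


def lead_action(score: float, routed_at: datetime, rep_replied: bool, now: datetime) -> str:
    """Return 'skip' (not routed), 'route', or 'escalate' for one scored lead."""
    if score <= ROUTE_ABOVE:
        return "skip"
    overdue = not rep_replied and now - routed_at >= REPLY_WINDOW
    if score > ESCALATE_ABOVE and overdue:
        return "escalate"
    return "route"


posted = datetime(2026, 4, 28, 9, 0)
later = posted + timedelta(hours=5)
print(lead_action(6.5, posted, False, later))  # skip
print(lead_action(7.5, posted, False, later))  # route (above 7 but not above 8)
print(lead_action(9.0, posted, False, later))  # escalate
print(lead_action(9.0, posted, True, later))   # route (a rep already replied)
```

Keeping the escalation bar (above 8) higher than the routing bar (above 7) means only the strongest unanswered leads can trigger an escalation, which matches the "escalates only if" wording.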

Agent 2 - Weekly Reporting: Runs every Monday at 8am. Pulls closed-won deals, support ticket volume, and cash runway from the CRM and the finance tool. Produces a 6-slide Google Slides deck and posts a 3-bullet summary in the #exec Slack channel before the weekly standup. The founder's Monday morning changed from "build the report before the meeting" to "read the report during coffee." Projected savings: 4 hours per week.
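Workspace Agents handles the schedule itself, so this is only an illustration of what "every Monday at 8am" resolves to. A minimal sketch assuming naive local time; `next_monday_8am` is hypothetical. In standard cron syntax the same schedule is `0 8 * * 1`.

```python
from datetime import datetime, timedelta


def next_monday_8am(now: datetime) -> datetime:
    """Return the first Monday 08:00 strictly after `now` (naive local time)."""
    days_ahead = (7 - now.weekday()) % 7  # weekday(): Monday == 0
    candidate = (now + timedelta(days=days_ahead)).replace(
        hour=8, minute=0, second=0, microsecond=0
    )
    if candidate <= now:  # already Monday at or after 08:00: use next week
        candidate += timedelta(days=7)
    return candidate


# April 28, 2026 is a Tuesday, so the next run is the following Monday.
print(next_monday_8am(datetime(2026, 4, 28, 15, 30)))  # 2026-05-04 08:00:00
```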

Agent 3 - Content Repurposing: Each week, takes long-form content (newsletters, podcast transcripts, recorded calls) from Drive, generates five LinkedIn post drafts and three email snippet options, and posts them in a Draft Review Slack channel. A human reviews and approves the batch before anything is published. Projected savings: 5 hours per week. First-week output included three posts that together drew 12,000 impressions, making it the founder's best LinkedIn week of 2026.
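The approval gate is the key safety property of this agent: nothing is published until a human signs off on the batch. A minimal sketch of that rule, with hypothetical names (`DraftBatch`, `release`, `approved_by`) and placeholder draft text; only the counts of five LinkedIn drafts and three email snippets come from the description above.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class DraftBatch:
    """One week's drafts, held in review until a human approves the whole batch."""
    linkedin_posts: list[str]
    email_snippets: list[str]
    approved_by: Optional[str] = None  # set by the human reviewer


def release(batch: DraftBatch) -> list[str]:
    """Approval gate: return nothing until the batch has been approved."""
    if batch.approved_by is None:
        return []
    return batch.linkedin_posts + batch.email_snippets


batch = DraftBatch(
    linkedin_posts=[f"LinkedIn draft {i}" for i in range(1, 6)],
    email_snippets=[f"Email snippet {i}" for i in range(1, 4)],
)
print(len(release(batch)))  # 0: still awaiting review
batch.approved_by = "founder"
print(len(release(batch)))  # 8: five posts plus three snippets
```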

Why this works better than one-off agents

Most people build agents reactively: "I need something to qualify leads" or "I need a recap agent." The Orchestrator forces you to think at the system level first by requiring you to name your top three bottlenecks before it designs anything. The result is a coherent fleet of agents that address your real operating constraints instead of a collection of useful-but-unrelated automations.

The self-audit routines are the second reason this approach compounds. Each agent reviews its own performance weekly and identifies what's working and what should change. That feedback loop is what turns a good agent into a great one over time. Without it, agents drift or stagnate. With it, they improve in proportion to the quality of the data they process.

Pro move: After the first week, feed the Orchestrator's own output back into it with the line: "Now optimize the fleet based on actual performance data from the last 7 days." Include each agent's self-audit report in the prompt. The Orchestrator will use that real usage data to rewrite the agent definitions with tighter triggers and better escalation thresholds. This is how you get compounding returns instead of a one-time productivity win.
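Assembling that follow-up message by hand gets error-prone once you have several agents. A minimal sketch, assuming each self-audit report is available as plain text keyed by agent name; `build_optimize_prompt` is a hypothetical helper, and the only text taken from the source is the optimization line itself.

```python
OPTIMIZE_LINE = "Now optimize the fleet based on actual performance data from the last 7 days."


def build_optimize_prompt(previous_output: str, audits: dict[str, str]) -> str:
    """Combine the optimization line, the Orchestrator's earlier output,
    and one labeled section per agent self-audit into a single message."""
    parts = [OPTIMIZE_LINE, "", "Previous Orchestrator output:", previous_output.strip()]
    for agent, report in audits.items():
        parts += ["", f"Self-audit: {agent}", report.strip()]
    return "\n".join(parts)


message = build_optimize_prompt(
    "(paste the Orchestrator's original agent definitions here)",
    {
        "Lead Qualification": "(paste this agent's week-one self-audit here)",
        "Weekly Reporting": "(paste this agent's week-one self-audit here)",
        "Content Repurposing": "(paste this agent's week-one self-audit here)",
    },
)
print(message)
```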